GitHub Self-Hosted Runners on GKE: A Comprehensive Guide

In this blog, we’ll go through GitHub self-hosted runners and how to use them with Google Kubernetes Engine (GKE). We will also go over the Terraform scripts that implement the self-hosted runner setup.

What are GitHub Actions runners?

Runners execute the jobs that GitHub Actions workflows assign to them. There are two types of runners:

GitHub-hosted runners – GitHub offers virtual machines running Linux, Windows, and macOS to run your workflows, and hosts these virtual machines in the cloud.

Self-hosted runners – You can use your own infrastructure (in your data center or cloud) to host your self-hosted runners.

This blog discusses self-hosted runners, which offer greater control over hardware, operating system, and software tools than GitHub-hosted runners. They let users create custom hardware configurations, install software from their local network, and choose operating systems that GitHub-hosted runners do not offer.

Actions Runner Controller (ARC)

Actions Runner Controller (ARC) is a Kubernetes controller that creates and manages self-hosted runners on your Kubernetes cluster, which simplifies running self-hosted runners on GKE clusters. With a few commands, you can set up self-hosted runners that scale up and down based on demand. Because the runners can be ephemeral and container-based, new runner instances can be created quickly and cleanly.

Deploying ARC on a GKE Cluster

Several resources need to be created as part of an ARC deployment: Helm charts, Kubernetes manifests, and other infrastructure components in the GCP environment. Creating these resources manually can be time-consuming and error-prone, so we use Terraform to deploy all of them. Furthermore, ARC deployment uses public Docker images, which may concern some enterprise clients. To address this, Terraform also pulls the necessary images from Docker Hub, pushes them into Artifact Registry, and uses those images to build the Kubernetes resources.

The Terraform scripts can be found in this repository.

Runner Deployment

A sample RunnerDeployment manifest that launches a self-hosted runner named example-runnerdeploy relies on the following fields:

- kind: RunnerDeployment indicates that the resource is of the RunnerDeployment custom resource kind.

- replicas: 1 deploys one replica. You can change the number of runners by modifying this parameter.

- repository: mumoshu/actions-runner-controller-ci is the repository that the Actions runner registers with when the pod comes up.
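A minimal version of such a manifest might look like the sketch below. It assumes the actions.summerwind.dev/v1alpha1 API group used by the summerwind edition of ARC; confirm the version against the CRDs installed by your Helm chart.

```yaml
# Minimal RunnerDeployment for a single repository-level runner
# (assumes the summerwind ARC CRDs are installed in the cluster)
apiVersion: actions.summerwind.dev/v1alpha1
kind: RunnerDeployment
metadata:
  name: example-runnerdeploy
spec:
  # Number of runner pods ARC keeps running
  replicas: 1
  template:
    spec:
      # Repository the runner registers with when the pod starts
      repository: mumoshu/actions-runner-controller-ci
```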

When this configuration is used, ARC creates one runner pod for example-runnerdeploy with two containers: runner and docker. The GitHub Actions runner is installed in the runner container, while Docker runs in the docker container.

Note – You don’t need to deploy this Kubernetes manifest manually. The Terraform scripts deploy all the resources for you; you only need to provide the required information via a .tfvars file. More details on how to use these scripts are given in the How To Use The Terraform Scripts section.

Customizing The Runner Deployment

You can build a custom container image for the runner if you want to run specific workloads on the self-hosted runners. To do so, create a Dockerfile that uses the summerwind/actions-runner image as its base image and install the extra dependencies directly in that image.

As a sample, the repository mentioned above already provides a Dockerfile that installs Terraform, the Google Cloud SDK, Python, and kubectl.

Scaling The Runner Deployment

ARC also supports scaling the runners dynamically. There are two mechanisms for this: (1) webhook-driven scaling and (2) pull-driven scaling. This blog focuses on the pull-driven model.

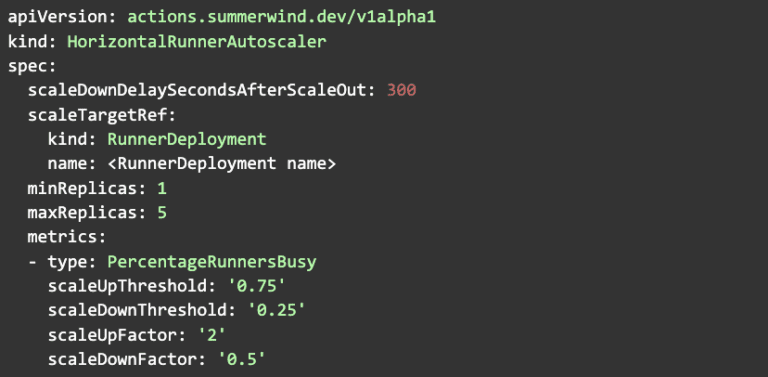

You can enable autoscaling by creating a Kubernetes object of type HorizontalRunnerAutoscaler. The minReplicas and maxReplicas fields set the minimum and maximum number of replicas to scale to. PercentageRunnersBusy is one of the pull-driven metric types that ARC supports. With this metric, ARC polls GitHub to determine how many runners in the RunnerDeployment’s namespace are busy, then scales according to the thresholds and scaling factors that you define. A simplified version of this HorizontalRunnerAutoscaler file is provided along with the Terraform scripts.
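A sketch of such a HorizontalRunnerAutoscaler, assuming the summerwind ARC API and the example-runnerdeploy RunnerDeployment from earlier. The autoscaler name, replica bounds, thresholds, and factors below are illustrative values, not necessarily those in the repository’s file.

```yaml
# Pull-driven autoscaling for example-runnerdeploy
# (illustrative values; tune them for your workload)
apiVersion: actions.summerwind.dev/v1alpha1
kind: HorizontalRunnerAutoscaler
metadata:
  name: example-runnerdeploy-autoscaler
spec:
  scaleTargetRef:
    # The RunnerDeployment whose replica count is adjusted
    kind: RunnerDeployment
    name: example-runnerdeploy
  minReplicas: 1
  maxReplicas: 5
  metrics:
    - type: PercentageRunnersBusy
      # Scale up when more than 75% of runners are busy
      scaleUpThreshold: '0.75'
      # Scale down when fewer than 25% of runners are busy
      scaleDownThreshold: '0.25'
      # Multiply the desired replica count by 2 when scaling up
      scaleUpFactor: '2'
      # Multiply the desired replica count by 0.5 when scaling down
      scaleDownFactor: '0.5'
```

With these values, ARC doubles the replica count when more than 75% of the runners are busy and halves it when fewer than 25% are busy, always staying between 1 and 5 replicas.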

How To Use The Terraform Scripts

The Terraform scripts provide an end-to-end deployment of the ARC setup, but there are some steps that you must perform before running them. We go into those steps in detail later in this section. First, let’s look at the modules in the repository and the resources they create:

1. Custom-images module: This module creates a repository in Artifact Registry, pulls the latest versions of the public Docker images used to deploy ARC, and pushes them to that Artifact Registry repository. Optionally, this module also lets you build a custom version of the runner image described in the previous section.

2. Gh-actions-controller module: This module sets up the GKE node pool into which the self-hosted runners are deployed, a custom service account attached to that node pool, Workload Identity Federation, and the Helm charts required for setting up ARC.

This code assumes that you already have a GKE cluster up and running with Workload Identity enabled at the cluster level, so it does not create a new GKE cluster. It only adds a new node pool to the existing cluster. Information about the existing cluster is passed to the Terraform scripts via the .tfvars file, as discussed in the following steps.

Steps for executing the scripts:

1. Clone the repository.

2. Create a GitHub Personal Access Token (PAT). actions-runner-controller can use a Personal Access Token to register self-hosted runners. Create one by following the steps below:

- Log in to your GitHub account and locate the “Generate new token” button.

- Select the repo scope.

- Click Generate token, then copy the token and keep it locally (we’ll need it in the next step).

3. Create a secret in Secret Manager with the GitHub PAT that we created in the previous step as its value.

4. Optional – Go to the Dockerfile that is present in the modules/custom-image directory. Modify it according to your requirements.

5. Update the values of variables in the .tfvars file. You can refer to the README file for descriptions about the variables.

6. Execute the Terraform scripts using the following commands:

- terraform init

- terraform plan

- terraform apply -target=module.docker_image -target=module.gh_actions_controller

- terraform apply

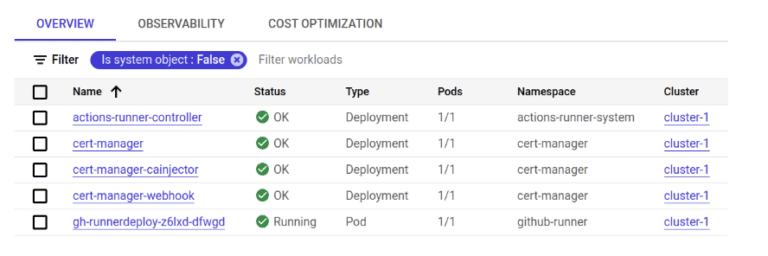

After successfully executing the Terraform scripts, you should see the ARC controller and runner workloads deployed on the GKE cluster.

In your GitHub repository, go to Settings → Actions → Runners to see the registered runners. The Status column indicates whether each runner is idle or currently running a job.

Now that the ARC infrastructure is set up, you can start running workflow jobs on these runners. The GitHub Actions workflow needs to know that it should run on the registered self-hosted runners instead of GitHub-hosted runners. To do so, add the runs-on: [self-hosted] parameter to your job.
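A minimal sample workflow might look like the sketch below. The file name, workflow name, trigger branch, and step contents are hypothetical; the runs-on list also includes the custom gke-runner label explained in the next paragraph.

```yaml
# .github/workflows/self-hosted-example.yml (hypothetical file name)
name: self-hosted-example
on:
  push:
    branches: [main]
jobs:
  build:
    # The job is queued only on runners that carry every listed label
    runs-on: [self-hosted, gke-runner]
    steps:
      - uses: actions/checkout@v4
      - name: Show which runner picked up the job
        # RUNNER_NAME is a default environment variable set by GitHub Actions
        run: echo "Running on $RUNNER_NAME"
```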

The self-hosted label is applied to all self-hosted runners, so using only this label selects any self-hosted runner. To pick runners that fit specific requirements, such as operating system, supply an array of labels beginning with self-hosted followed by any further labels you need. Jobs are queued only on runners that have all of the labels you supply. For example, adding a custom label called gke-runner (runs-on: [self-hosted, gke-runner]) makes sure that the job is scheduled only on runners that carry this label. Hence, the RunnerDeployment resource must declare the same custom label for its runners.
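A minimal sketch of a RunnerDeployment that attaches the gke-runner label, again assuming the summerwind actions.summerwind.dev/v1alpha1 API:

```yaml
# RunnerDeployment whose runners carry the custom gke-runner label
apiVersion: actions.summerwind.dev/v1alpha1
kind: RunnerDeployment
metadata:
  name: example-runnerdeploy
spec:
  replicas: 1
  template:
    spec:
      repository: mumoshu/actions-runner-controller-ci
      # Must match the extra label in the workflow's runs-on list
      labels:
        - gke-runner
```

ARC registers these runners with the self-hosted label as well, so a job requesting [self-hosted, gke-runner] matches them.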

Runner Groups for GitHub Organization (optional)

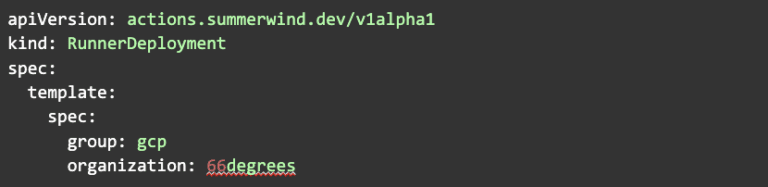

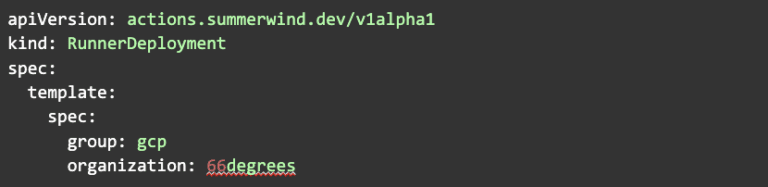

We’ve seen how to add runners to a GitHub repository in the sections above, but runners can also be added at the organization level. You can then use runner groups to limit which repositories in the organization can use those runners. Runner groups must be created in GitHub before they can be referenced.

To register runners at the organization level, the RunnerDeployment resource specifies the organization and the runner group instead of a repository.
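A minimal sketch, assuming the summerwind ARC API. The resource name is hypothetical, and your-organization-name and NewGroup are placeholders to replace with your own organization and an existing runner group.

```yaml
# Organization-level RunnerDeployment assigned to a runner group
apiVersion: actions.summerwind.dev/v1alpha1
kind: RunnerDeployment
metadata:
  name: example-org-runnerdeploy
spec:
  replicas: 1
  template:
    spec:
      # Register with the organization instead of a single repository
      organization: your-organization-name
      # Runner group that must already exist in GitHub
      group: NewGroup
      labels:
        - gke-runner
```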

When working with organization runners, make sure that the GitHub Personal Access Token (PAT) you create has the following scopes:

- repo (Full control)

- admin:org (Full control)

- admin:public_key (read:public_key)

- admin:repo_hook (read:repo_hook)

- admin:org_hook (Full control)

- notifications (Full control)

- workflow (Full control)

Conclusion

In this blog, we covered the concepts and implementation of self-hosted runners on Google Kubernetes Engine (GKE) using Actions Runner Controller (ARC). Self-hosted runners give you more control over hardware, operating system, and software tools than GitHub-hosted runners provide. We also saw how to deploy ARC on GKE clusters using Terraform.

If you are interested in learning more about GKE or how 66degrees can help with your modernization initiatives, please don’t hesitate to get in touch.